Overview

This article covers a range of tips that can be used to speed up any 3D graphics system.

Bottlenecks

To understand why something makes your system faster, it helps to understand what makes it slow. An accurate ranking of which bottlenecks have the largest impact can’t really be made, because it depends not only on the API but also on other factors such as how complex the lighting is. I present the major bottlenecks here in the most generalized order.

- Shader swapping: Swapping shaders has a huge impact on older APIs. Each time a shader is set, the API must determine all over again how to transform the incoming vertices. DirectX 10 and DirectX 11 require a shader signature when an input layout is created, so the transforming routine is determined only once and swapping shaders is not so rough. This allows the #2 bottleneck, swapping textures, to be roughly even in terms of overhead, perhaps even worse.

- Texture swapping: Uploading textures is slow. Sometimes unused textures need to be cached out, which is even slower.

- Transfers: Sending vertices and indices is also slow. Never send anything that does not need to be sent.

- Overdraw: Overdraw occurs when a pixel is fully rendered, only to have another pixel fully rendered on top of it later. The previous pixel was a complete waste of time in this case, and that wasted time can be significant if the pixel shader is complex.

- Redundant render states: This mainly applies to systems that are not entirely shader-based. If you are unfortunate enough to not be using shaders for every render call, you must implement wrappers around all of the render states you use, such as lighting on/off, in order to never set the same state twice. If you are using shaders, you only need a mechanism to avoid applying a shader that is already applied, and the same for textures. Lighting will just be your own boolean switch on the CPU, and will be handled, along with every other render state, inside your shader.
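A wrapper that filters redundant state changes could look roughly like the sketch below. The `RenderState` enum and the `StateCache` class are hypothetical names made up for illustration, and the counter stands in for whatever call your API actually uses to set a state.

```cpp
#include <array>
#include <cstddef>
#include <cstdint>

// Hypothetical render states; a real API has many more.
enum class RenderState : std::size_t { Lighting, AlphaBlend, DepthWrite, Count };

class StateCache {
public:
    // Returns true if the state was forwarded to the (imaginary) API,
    // false if the call was redundant and skipped.
    bool Set(RenderState state, std::uint32_t value) {
        Slot& slot = slots_[static_cast<std::size_t>(state)];
        if (slot.known && slot.value == value) {
            return false;  // Same value as last time: nothing to do.
        }
        slot.known = true;
        slot.value = value;
        ++apiCalls_;  // Stand-in for the real API call.
        return true;
    }

    std::size_t ApiCalls() const { return apiCalls_; }

private:
    struct Slot {
        bool known = false;  // False until the state has been set once.
        std::uint32_t value = 0;
    };
    std::array<Slot, static_cast<std::size_t>(RenderState::Count)> slots_{};
    std::size_t apiCalls_ = 0;
};
```

The same pattern applies to shaders and textures: remember the last ID that was applied and skip the call when the new ID matches.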

Sorting

The above bottlenecks can be mostly mitigated by sorting the objects that are about to be rendered. Sort by shader, then by texture(s), and then loosely by depth. When it comes to opaque objects, you want to render near-to-far as best as you can without incurring too many shader and texture swaps. When sorting translucent objects, depth is really the only sorting criterion, because far-to-near rendering is necessary to avoid artifacts.

You may need to experiment; if overdraw is not a major bottleneck for you, you can ignore depth sorting (opaque objects) and render all objects that use the same shader, and then within those objects, draw all objects using the same textures together.

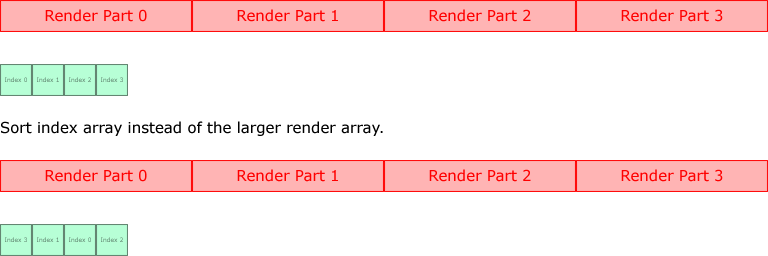

This is called a render queue and it is extremely easy to implement, while being very helpful to your performance. For each object that is in view (perform per-object and per-sub-object culling), for each mesh on that object, for each part of each mesh, submit the data needed for sorting to the render queue. It should be very compact. Specifically, it holds a pointer to the object that submitted the data, the shader ID, the texture IDs, and the distance from the camera (which does not need to be the true distance, and can be obtained simply via a dot product of the camera view direction against the position of the mesh’s center point). When sorting, instead of copying and moving these little bits of render values around, build a list of indices into this data and sort those instead. This allows you to move only DWORDs at a time during the sort.
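As a rough sketch of the idea, assuming a single texture ID per item and hypothetical names (`RenderQueueItem`, `ViewDepth`, `SortOpaque`), the queue data and the index sort for opaque objects might look like this:

```cpp
#include <algorithm>
#include <cstdint>
#include <numeric>
#include <vector>

struct Vec3 { float x, y, z; };

// Depth along the view direction; cheaper than a true distance.
inline float ViewDepth(const Vec3& camPos, const Vec3& camDir, const Vec3& center) {
    return (center.x - camPos.x) * camDir.x +
           (center.y - camPos.y) * camDir.y +
           (center.z - camPos.z) * camDir.z;
}

struct RenderQueueItem {
    const void*   owner;      // Object that submitted this render part.
    std::uint32_t shaderId;
    std::uint32_t textureId;  // One texture only, to keep the sketch short.
    float         depth;      // Result of ViewDepth() for the part's center.
};

// Returns indices into `items`, ordered by shader, then texture, then
// near-to-far. The items themselves are never moved.
inline std::vector<std::uint32_t> SortOpaque(const std::vector<RenderQueueItem>& items) {
    std::vector<std::uint32_t> order(items.size());
    std::iota(order.begin(), order.end(), 0u);
    std::sort(order.begin(), order.end(),
              [&items](std::uint32_t a, std::uint32_t b) {
                  const RenderQueueItem& l = items[a];
                  const RenderQueueItem& r = items[b];
                  if (l.shaderId != r.shaderId) return l.shaderId < r.shaderId;
                  if (l.textureId != r.textureId) return l.textureId < r.textureId;
                  return l.depth < r.depth;
              });
    return order;
}
```

Only 32-bit indices are swapped during the sort; the larger items stay where they were submitted.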

Run over the sorted indices and render each object in the order defined by the indices. In this example, that would be render parts 3, 1, 0, and then 2. This allows you to heavily reduce the amount of data you copy and move. Rendering is not directly handled by the render queue. The final render is passed off to the actual object that submitted the render-part data. This allows the object to make any final preparations, activate its shader and textures, and select the index/vertex buffers to use for the render. In other words, the data passed to the render queue is just a reference that lets the render queue pick a good order for rendering the objects. The order will be optimal as long as the objects actually use the shaders and textures they told the render queue they would, but they are not strictly required to do so.
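The hand-off back to the submitting object can be sketched as follows. The `Renderable` interface, `QueueEntry`, and `Dispatch` are hypothetical names chosen for this example, not part of any particular engine.

```cpp
#include <cstdint>
#include <vector>

// Hypothetical interface implemented by anything that can draw itself.
class Renderable {
public:
    virtual ~Renderable() = default;
    // The object activates its own shader, textures, and buffers here.
    virtual void Render(std::uint32_t partId) = 0;
};

struct QueueEntry {
    Renderable*   owner;   // Who submitted this entry.
    std::uint32_t partId;  // Which render part of the owner to draw.
};

// Walks the already-sorted index list and hands each draw back to its owner.
inline void Dispatch(const std::vector<QueueEntry>& entries,
                     const std::vector<std::uint32_t>& sortedOrder) {
    for (std::uint32_t index : sortedOrder) {
        const QueueEntry& entry = entries[index];
        entry.owner->Render(entry.partId);
    }
}
```

The queue only decides the order; it never touches shaders, textures, or buffers itself.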

Attribute-Specific Buffers

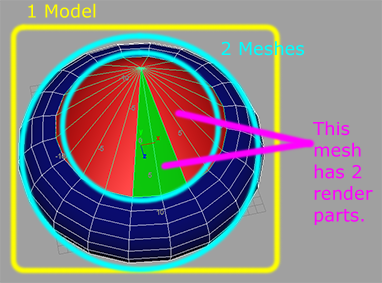

In general, a model has many model parts, or meshes. A robot is a model, but its arm, foot, and head are model parts/meshes. Then, within each of these parts, you will have multiple vertex buffers for each part of those meshes (which I call render parts, for lack of better terminology). If half of the head uses one material and the other half uses another, you need to either make two vertex buffers, or make one vertex buffer but draw it in two passes, the first set of vertices for the left half, and the second set for the right half, changing materials between renders. This is the typical situation.

In this image, there is only one model, but 2 meshes. The blue ring mesh has only one render part, but the cone mesh has faces that don’t all use the same material. We must render the red faces, change materials, and then render the green faces. So this mesh has two render parts/vertex buffers.

For optimization, these vertex buffers should be broken down even further into multiple vertex buffers, using multiple streams together to render each part. For example, store the vertices separately from the normals, texture coordinates, etc. Keep one vertex buffer for vertices, one for normals, binormals, and tangents, and another vertex buffer for the rest, optionally breaking it into even more buffers.

The reason is that transfers to the GPU from the CPU are slow and you never want to send data that is not being used. In a naive approach, with only one vertex buffer for all attributes, normals will be submitted even if lighting is disabled, and sometimes binormals and tangents along with them. By keeping them in their own buffer, it is trivial to avoid submitting them when they are not needed.

Another example is during the creation of shadow maps. You are only creating a depth table, so you don’t need color information at all (except in handling colored glass). If you have the vertices in their own vertex buffer, you can avoid submitting (in an average case) normals and 1 set of texture coordinates per-vertex. That is 20 bytes per vertex. You also don’t have to submit any textures, your shaders can be as simple as humanly possible, and you don’t have to swap shaders between objects during the entire render. This makes the creation of shadow maps almost free.
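The 20-byte figure comes from a 12-byte normal (3 floats) plus one 8-byte set of texture coordinates (2 floats). A small sketch of that arithmetic, using hypothetical stream flags and pass definitions, might be:

```cpp
#include <cstddef>

// Bit flags naming the hypothetical vertex streams of one render part.
enum Stream : unsigned {
    kPositions = 1u << 0,  // 3 floats = 12 bytes
    kNormals   = 1u << 1,  // 3 floats = 12 bytes
    kTexCoords = 1u << 2,  // 2 floats =  8 bytes
};

// Bytes per vertex that must be bound for a given set of streams.
constexpr std::size_t BytesPerVertex(unsigned streams) {
    return ((streams & kPositions) ? 12u : 0u) +
           ((streams & kNormals)   ? 12u : 0u) +
           ((streams & kTexCoords) ?  8u : 0u);
}

// A lit pass needs everything; a shadow-map pass needs positions only.
constexpr unsigned kLitPass    = kPositions | kNormals | kTexCoords;
constexpr unsigned kShadowPass = kPositions;
```

With these definitions the lit pass binds 32 bytes per vertex and the shadow pass binds 12, which is the 20-byte saving described above.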

Depth Pre-Pass

Z-buffering is a way of making sure nearer pixels stay above pixels farther back. Unfortunately this happens after each pixel has been fully processed by the shaders. This is because shaders are able to change the depth value. Some graphics cards are optimized in that they can take a peek into a shader and realize no depth modifications are being made, and may cull pixels early rather than late. Unfortunately, this can’t be expected behavior (though it is rapidly becoming more common).

If you have followed my above set of advice, you will have vertices in their own vertex buffer. Once again, without texture uploads and shader swapping, and with little data transfer and small shaders, making a depth-only pre-render of the scene is trivial. A depth pre-pass is extremely efficient in this case, and it works by rendering your scene, saving only the depth values. Once the depth buffer is finalized, set your depth comparison to equal and render your scene again.
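The order of state changes for the two passes can be sketched with a mock device that only logs what it is asked to do. The `Device` type and its methods are hypothetical, and disabling color writes in the first pass and depth writes in the second are common-practice assumptions of mine rather than something stated above.

```cpp
#include <string>
#include <vector>

enum class DepthFunc { Less, Equal };

// Hypothetical device wrapper that only records the requested state changes.
struct Device {
    std::vector<std::string> log;
    void SetColorWrites(bool on) { log.push_back(on ? "color on" : "color off"); }
    void SetDepthWrites(bool on) { log.push_back(on ? "depth write on" : "depth write off"); }
    void SetDepthFunc(DepthFunc f) {
        log.push_back(f == DepthFunc::Less ? "depth func less" : "depth func equal");
    }
    void DrawScene(const char* shaderSet) { log.push_back(std::string("draw ") + shaderSet); }
};

inline void RenderWithDepthPrePass(Device& device) {
    // Pass 1: positions only, trivial shader, depth is the only output.
    device.SetColorWrites(false);  // Assumption: color output is masked off.
    device.SetDepthWrites(true);
    device.SetDepthFunc(DepthFunc::Less);
    device.DrawScene("depth-only");

    // Pass 2: full shaders; only pixels matching the stored depth survive.
    device.SetColorWrites(true);
    device.SetDepthWrites(false);  // Assumption: depth is already final.
    device.SetDepthFunc(DepthFunc::Equal);
    device.DrawScene("full");
}
```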

This is only efficient if the graphics card is performing early-outs on depth, before the shader is executed. If the card is performing the depth check afterwards, as older cards did, you have only added a bit of extra work to your load. The only way to know which cards are optimized is to simply research which cards are known to be optimized and keep a table of this information, as far as I know.

Shader Permutations vs. Branching

The speed of branching in shaders depends on the hardware and the API. In DirectX 9, true branching can only be done on boolean registers which can’t be modified during execution of the shader. OpenGL makes no guarantees that any true branching will take place. By “true” branching, I am referring to the opposite of the alternative, which is when both sides of the branch are taken and the result is interpolated between them to effectively make one side or the other non-contributory to the result. This is extremely slow.

On the other hand, as pointed out earlier, switching shaders is expensive, particularly on older APIs. You have to consider whether it is less costly to incur the wrath of a dynamic branch, with both sides of the if/else being executed, or to incur the wrath of a shader swap that has a more efficient execution time. As a rule of thumb, if the same shader can be executed for many objects, it is best to use permutation. Permutation is most easily accomplished by writing one shader per rendering class (generating shadow maps is one class of shaders, rendering ambient-only is one class, multi-pass lighting is a class, etc.) using #ifdef to omit or activate chunks of code.
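On the CPU side, one way to generate such permutations is to prepend `#define` lines to a shared shader source and cache the result per flag combination. The sketch below uses hypothetical flag and class names, and it assumes the base source has no directive that must come first (GLSL's `#version` line, for example, would need to be emitted before the defines).

```cpp
#include <cstddef>
#include <cstdint>
#include <string>
#include <unordered_map>
#include <utility>

enum ShaderFlag : std::uint32_t {
    kLighting = 1u << 0,
    kFog      = 1u << 1,
};

// Prepends one #define per enabled flag to the shared shader source.
inline std::string BuildPermutationSource(const std::string& baseSource,
                                          std::uint32_t flags) {
    std::string source;
    if (flags & kLighting) source += "#define LIGHTING\n";
    if (flags & kFog)      source += "#define FOG\n";
    source += baseSource;
    return source;
}

// Caches one generated source per flag combination. A real system would
// store the compiled shader instead of the text.
class PermutationCache {
public:
    explicit PermutationCache(std::string baseSource) : base_(std::move(baseSource)) {}

    const std::string& Get(std::uint32_t flags) {
        auto it = cache_.find(flags);
        if (it == cache_.end()) {
            it = cache_.emplace(flags, BuildPermutationSource(base_, flags)).first;
        }
        return it->second;
    }

    std::size_t Size() const { return cache_.size(); }

private:
    std::string base_;
    std::unordered_map<std::uint32_t, std::string> cache_;
};
```

Inside the shader, blocks wrapped in `#ifdef LIGHTING` or `#ifdef FOG` are then compiled in or out per permutation.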

Lighting is one case where it is better to use permutation rather than to branch. In a typical scene, lighting being on or off is something that will affect every object in the scene, so a lighting-off permutation is unlikely to incur more shader swaps than rendering with lighting on. Even if lighting is being switched on and off, during a single render, all objects will use only the lighting shaders or the no-lighting shaders.

Exit Early/Work Reduction

Exiting early is not guaranteed to actually work, since some implementations may encounter a discard/return pair but still execute the rest of the shader. We can’t get around this, but when early exits are correctly supported, they can prove a huge help. Here are a few things that can provide early exits or workload reductions:

- Black Lambertian terms: A diffuse material with a 0 specular term has no need for lighting calculations. Once lighting is multiplied by the diffuse term, the result is still 0, and no specular will be added to change that. There is no need to handle lighting at all in this case. By using multiple vertex buffers as mentioned above, you also don’t have to send normals, tangents, and binormals.

- Fully fogged: If a pixel is outside the fog’s far reach, simply return the fog color and exit early.

- Alpha testing: If the alpha is 0, or below the set threshold, exit early before any other computations are done. This implies that the pixel’s alpha is one of the first things you should compute, before lighting, etc.
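The ordering of these checks can be illustrated with a CPU-side mock of a pixel shader. This is C++ rather than real shader code, all names are hypothetical, and the lighting itself is reduced to a placeholder; the point is only that the cheap tests run before the expensive work.

```cpp
struct Color { float r, g, b, a; };

struct PixelInput {
    float alpha;        // Computed first so the alpha test can run early.
    float fogDistance;  // Distance fed to the fog equation.
    Color diffuse;      // Material diffuse color.
    float specular;     // Material specular strength.
};

struct ShadeResult {
    bool  discarded;
    bool  ranLighting;  // True only if the expensive path executed.
    Color color;
};

inline ShadeResult Shade(const PixelInput& in, float alphaThreshold,
                         float fogFar, const Color& fogColor) {
    // Alpha test: nothing else matters for a discarded pixel.
    if (in.alpha <= 0.0f || in.alpha < alphaThreshold) {
        return {true, false, {0.0f, 0.0f, 0.0f, 0.0f}};
    }
    // Fully fogged: the result is just the fog color.
    if (in.fogDistance >= fogFar) {
        return {false, false, fogColor};
    }
    // Black diffuse and no specular: lighting cannot change the result.
    const bool blackDiffuse =
        in.diffuse.r == 0.0f && in.diffuse.g == 0.0f && in.diffuse.b == 0.0f;
    if (blackDiffuse && in.specular == 0.0f) {
        return {false, false, {0.0f, 0.0f, 0.0f, in.alpha}};
    }
    // Placeholder for the real (expensive) lighting computation.
    const float nDotL = 0.5f;
    return {false, true,
            {in.diffuse.r * nDotL, in.diffuse.g * nDotL, in.diffuse.b * nDotL, in.alpha}};
}
```

In practice the black-diffuse case is usually better handled on the CPU by selecting a no-lighting shader, since that also lets you skip sending normals.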

More to come as I have time to write.

L. Spiro

awesome blog, do you have twitter or facebook? i will bookmark this page thanks.

My blog:

[link removed; this is not a link to a blog]

I do not, however if you want to ask questions, start discussions, etc., you can join the forums at: http://lspiroengine.com/forums/

L. Spiro

Nice post L. Spiro (bookmarked your blog).

I’m already doing most of these optimizations, except for sorting indices instead of render parts.

About depth pre-pass, in shader model 5 you can force early depth testing by adding [earlydepthstencil] before your pixel shader (check DX documentation for more details).

I haven’t yet had a chance to look much at Direct3D 11, though I will be supporting it. It is good to know that they have finally added a way to force that. I was really wondering why it was not added much much sooner.

I recommend sorting the indices. While there is a bit of overhead in creating the index table at the start, it is more than offset by the decrease in data-copy overhead. Additionally, you can optimize the creation of the index table so that it can be either skipped entirely (if the number of elements is the same as in the last render) or reduced to only adding a few more indices (when the number of elements increases).
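A minimal sketch of that index-table reuse is below. The `IndexTable` class is a hypothetical name, and rebuilding from scratch when the element count shrinks is my own assumption, since that case is not covered above.

```cpp
#include <cstddef>
#include <cstdint>
#include <numeric>
#include <vector>

// Keeps last frame's index table so it rarely has to be rebuilt.
class IndexTable {
public:
    // Makes the table a permutation of 0..count-1, reusing what it can.
    void Resize(std::size_t count) {
        const std::size_t old = indices_.size();
        if (count == old) {
            return;  // Same element count: last frame's table is still valid.
        }
        if (count > old) {
            // Grew: keep the old order and append only the new indices.
            indices_.resize(count);
            std::iota(indices_.begin() + static_cast<std::ptrdiff_t>(old),
                      indices_.end(), static_cast<std::uint32_t>(old));
        } else {
            // Shrank (assumption): start over with 0..count-1.
            indices_.resize(count);
            std::iota(indices_.begin(), indices_.end(), 0u);
        }
    }

    std::vector<std::uint32_t>& Indices() { return indices_; }

private:
    std::vector<std::uint32_t> indices_;
};
```

Keeping last frame's order also gives the sort a nearly sorted starting point when the scene changes little between frames.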

L. Spiro

Hi L. Spiro,

Very nice article. It’s kind of you.

1/ About the sorting, nowadays, what do you think of BSP tree as an alternative for rendering back-to-front translucent polygons? Is it worth implementing it? (or will depth-sorting be enough?)

2/ Please have look at the section “How to sort” in this link:

http://www.opengl.org/wiki/Transparency_Sorting

You will see that if you sort by center-point to camera distance, you could be causing artifacts. BSP sorting solves this though.

So is BSP a better substitute for depth sorting?

Many thanks in advance,

Jean.

Hello and thank you.

I think it depends on what you mean by “better”. BSP trees can deliver a more accurate result at the cost of speed. On small, non-complex objects this may be fine, but may slow down a great deal on larger objects.

Additionally, BSP trees do not work so well with dynamic objects, and even if two objects are non-deforming but still moving, they can’t both be put into one BSP tree. You would need one for each, and still need to think of a way to decide which to draw first, and still may get artifacts.

On the other hand, regular sorting can cause more artifacts, but it is a lot faster.

#1: You of course only need to sort the index buffer, not the actual triangles inside the vertex buffer.

#2: A bubble sort produces extremely fast results due to frame coherency. Triangles do not change order very often after being sorted, so from frame-to-frame most of the sort involves simply running once over the index buffer start-to-finish and maybe swapping one or two indices.
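To illustrate point #2, here is a sketch of a bubble sort with an early-out, operating on a list of triangle numbers and a table of precomputed depths. The function name and the pass counter are hypothetical and only there to make the frame-coherency effect visible.

```cpp
#include <cstddef>
#include <cstdint>
#include <utility>
#include <vector>

// Sorts `order` so that keys[order[i]] is descending (far-to-near).
// Returns the number of passes made over the array; an already sorted
// input costs exactly one pass.
inline std::size_t CoherentBubbleSort(std::vector<std::uint32_t>& order,
                                      const std::vector<float>& keys) {
    std::size_t passes = 0;
    bool swapped = true;
    while (swapped) {
        swapped = false;
        ++passes;
        for (std::size_t i = 1; i < order.size(); ++i) {
            if (keys[order[i - 1]] < keys[order[i]]) {
                std::swap(order[i - 1], order[i]);
                swapped = true;
            }
        }
    }
    return passes;
}
```

When the order barely changes from the previous frame, the loop ends after one or two passes, which is where the speed comes from. A large change in view direction still hits the quadratic worst case.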

Still, picking a good center point for testing for distance is not a solved problem, and artifacts will be inevitable.

As for me, I will not be implementing BSP trees for sorting purposes, and will accept artifacts in exchange for performance.

If in the future a client really needs more accurate transparency, I would implement it then.

L. Spiro

L. Spiro,

First, sorry for my late comeback (I almost forgot that I left a question here).

Yes I like your answer as it clearly shows me what I didn’t really understand (trade-off accuracy for performance). That makes a huge amount of sense.

From what you’ve said, it appears that I do not have a compulsory requirement for BSP use. I think I will go for performance as well (I will however allow an ‘accuracy’ setting to be turned on/off by the application user – this way I could then switch to BSPs internally). My app uses small objects with transparent openings like some kind of window, so I can afford to use BSP. These will not be used for dynamic objects, just static.

sorry to bother you with this last question:

Would you please elaborate a bit more on your #1 – the index buffer sorting? Do you mean that, AFTER SORTING IT, this should contain all indices in ascending order?

Many thanks again for your help,

(I’ll try and read your response earlier – say tomorrow)

Cheers,

Jean

The index buffer is not sorted such that indices are compared against each other. I mean that each triplet of indices represents a triangle in the vertex buffer, and should be sorted as if it were the actual triangle. In this way, for each triangle, you move 3 integers (which may even be 16-bit in some cases) instead of 9 floats for the vertices, 9 floats for the normals, 6 floats for the UVs, etc.

Furthermore, don’t calculate the distance away from the camera in the sort routine itself. This will cause you to calculate the distances redundantly. Start by building a table of distances, one for each triangle. Then perform the sort using just that table.
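Put together, the two steps might look like the sketch below. The function name is hypothetical, the triangle centroid is used as the center point (one choice among several), and depth is measured along the view direction, which orders triangles the same way regardless of the camera position.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <numeric>
#include <utility>
#include <vector>

struct Vec3 { float x, y, z; };

// Reorders the triangles of an index buffer far-to-near along viewDir.
inline void SortTrianglesBackToFront(std::vector<std::uint16_t>& indices,
                                     const std::vector<Vec3>& positions,
                                     const Vec3& viewDir) {
    assert(indices.size() % 3 == 0);
    const std::size_t triCount = indices.size() / 3;

    // Step 1: one depth per triangle, computed once (centroid dot viewDir).
    std::vector<float> depth(triCount);
    for (std::size_t t = 0; t < triCount; ++t) {
        const Vec3& a = positions[indices[t * 3 + 0]];
        const Vec3& b = positions[indices[t * 3 + 1]];
        const Vec3& c = positions[indices[t * 3 + 2]];
        depth[t] = ((a.x + b.x + c.x) * viewDir.x +
                    (a.y + b.y + c.y) * viewDir.y +
                    (a.z + b.z + c.z) * viewDir.z) / 3.0f;
    }

    // Step 2: sort triangle numbers using only the depth table.
    std::vector<std::size_t> order(triCount);
    std::iota(order.begin(), order.end(), std::size_t{0});
    std::sort(order.begin(), order.end(),
              [&depth](std::size_t l, std::size_t r) { return depth[l] > depth[r]; });

    // Step 3: rewrite the index buffer, moving three indices per triangle.
    std::vector<std::uint16_t> sorted(indices.size());
    for (std::size_t i = 0; i < triCount; ++i) {
        for (std::size_t k = 0; k < 3; ++k) {
            sorted[i * 3 + k] = indices[order[i] * 3 + k];
        }
    }
    indices = std::move(sorted);
}
```

The vertex data itself is never touched; only the 16-bit indices move.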

L. Spiro

Oooh I see! haha! Very nice! That makes a simpleton like me very happy

Ok it makes perfect sense. I’ll go away and do that.

God bless you – and stay in touch!

Jean

You mention that keeping vertex attributes in separate buffers is preferred, especially during a depth-only rendering pass. It would also be nice for asynchronous CPU skinning.

However, I’ve read that using interleaved arrays is faster for GPUs when rendering (I assume better caching). With this in mind, do the tradeoffs of non-interleaved arrays outweigh the other?

Interleaved arrays are a bit faster, and also have the advantage of typically wasting less space. That is, if you put the vertices in their own buffer and you pad buffers to 32 bytes, you have wasted 20 bytes (however my tests show that it is still faster to pad even a 12-byte element out to 32 bytes).

My tests also showed no improvement in performance by padding vertex elements to 32 bytes in OpenGL, though this could depend on the drivers or other implementation factors.

Because of this, there is not a single correct answer, but here are a few considerations that could guide you in the right direction:

#1: In DirectX, 32-byte padding helps a lot, but it complicates the “equation” for optimal buffer layouts. Consider a buffer containing 12 bytes for vertices, 12 bytes for normals, and 8 bytes for UVs. This is exactly 32 bytes. If you were to split this into 2 buffers, both padded, you would be sending twice as much as you need to send for normal rendering, and the same amount for shadow rendering.

#2: But once you add anything more to the buffer (a second set of UVs, a tangent and binormal, etc.), your normal renders would pad out to 64 bytes. If you add 12 bytes or fewer, the solution is clear: Remove the vertices (-12 bytes from the original buffer, which brings it back to 32 bytes or fewer) and keep 2 buffers. In a normal render you send the same amount (64 bytes total), and in a shadow render you send half as much. It offsets the cost of using multiple streams.

#3: But after you add more than 12 bytes your main buffer will still be 64 bytes even after you extract the vertices. Now you need to consider clever strategies in order to get the first buffer back down to 32 bytes or fewer, such as moving your UVs over to the second buffer, or perhaps your normals. If you can find such a strategy, then again you win.

#4: And then there is the OpenGL side. On 3 implementations I tried, between ATI and NVidia, I noticed no gain by padding the buffers to 32 bytes. Because padding is no longer an issue, it is very basic. Keeping separate buffers always wins. The minimal transfer during the depth pass is almost always enough to offset the overhead in using multiple streams.

In 3 out of 4 cases it is a winning strategy to keep multiple buffers, although the 1 losing case is also the most common situation.
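The byte counts in cases #1 through #3 can be checked with a few lines of arithmetic. The helper names below are hypothetical, and the sketch assumes every buffer is padded to a multiple of 32 bytes per vertex, as in the DirectX cases.

```cpp
#include <cstddef>

// Rounds a per-vertex size up to the next multiple of 32 bytes.
constexpr std::size_t PadTo32(std::size_t bytes) {
    return (bytes + 31u) / 32u * 32u;
}

struct Layout {
    std::size_t positionBytes;  // Position data, e.g. 12.
    std::size_t otherBytes;     // Everything else (normals, UVs, ...).
};

// One interleaved buffer: every pass binds the whole padded vertex.
constexpr std::size_t SingleBufferBytes(const Layout& l) {
    return PadTo32(l.positionBytes + l.otherBytes);
}

// Two padded buffers: a normal pass binds both of them.
constexpr std::size_t SplitNormalPassBytes(const Layout& l) {
    return PadTo32(l.positionBytes) + PadTo32(l.otherBytes);
}

// Two padded buffers: a shadow pass binds positions only.
constexpr std::size_t SplitShadowPassBytes(const Layout& l) {
    return PadTo32(l.positionBytes);
}
```

For case #1 (`{12, 20}`) the single buffer costs 32 bytes in every pass, while the split costs 64 in a normal pass and 32 in a shadow pass. For case #2 (`{12, 32}`) both layouts cost 64 in a normal pass, but the split shadow pass drops to 32. For case #3 (`{12, 40}`) the split normal pass rises to 96 unless more data is moved into the first buffer.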

And remember this does not just apply to vertices. During the ambient pass you can omit normals (this is why, in case #3, I would suggest trying to get buffer #1 down by transferring UVs first), and this can be a huge savings if you have binormal and tangent data along with regular normals as well. That in itself is over 32 bytes.

When trying to think of the most optimal of all cases, it gets very complicated. But I hope this has given you what you need to consider optimizing your own buffers.

L. Spiro

My experience in OpenGL was that padding to 4-byte alignment helped. I had a vertex size of 6 bytes, and upping it to 8 bytes (even though I didn’t need the extra space) made the whole thing run faster.

My guess is that D3D is similar, but I can’t be wasting 32 bytes per vertex for something that only needs 6 (I already use 100s of megs), so I haven’t tried 32.

Hey Spiro..probably dumb question..

What I get from your post is that vertex and index buffers are left out of your render queue, is that right?

“The render queue applies the textures and shaders, etc., but the final render is passed off to the actual object that submitted the render-part data. This allows the object to make any final preparations and select the index/vertex buffers to use for the render.”

How do you handle “VB state changes” then? Does this have to do with the fact that 2 different objects are not likely to share the same VB?

Im imagining how it works for a multiple pass object to be rendered, you set the render queue with the commands(prepare shaders, textures, etc), but how do you set that pass 1 uses only VB 1 (say pos), and pass 2 uses VB 1, VB 2(normals) and VB 3(UV), for example? Why not on the render queue also? Am I misunderstanding everything?

Yes, they are left out of the render queue and activated by the objects themselves when it is time to draw. Actually the objects also activate their own textures and shaders, so if I said otherwise in this article then I will need to correct that (done) and apologize for the confusion. That was my old system for my old engine, but the new engine has the objects themselves activating each texture and shader, and this is much better.

There are several reasons for not including the vertex/index buffer IDs in the render-queue items.

#1: It is important to keep the render-queue items very simple because the more things you have to compare in order to sort the items and the more you have to copy to create the render-queue items the slower your performance.

#2: So with that in mind we want to eliminate excess data. There can be up to 16 vertex buffers active at a time, but since it will almost always be just 1 or 2 at a time, it is wasteful to add a 16-ID array to the render-queue items.

#3: There can also be up to 16 textures (or 8 on some platforms etc.), but it makes more sense to include these because they are very important to make non-redundant and there are often many more textures active rather than just 1 or 2 (less waste).

#4: And this kills 2 birds with one stone. In the most frequent case where the same index/vertex buffers are shared, those objects also have the same textures and shaders, which means they will be drawn sequentially implicitly. The cases where the same vertex buffer is shared but not with the same textures/shaders are so rare that you are unlikely to benefit from trying to add this as an explicit sorting criterion.

For your last question, you can blame my mistake of saying that the render queue will activate textures etc. by itself. To do multiple passes, just submit 2 render-queue items to the render queue with different pass numbers set and whatever shaders/textures those passes need. When sorting, you of course must sort by pass first except in the case of transparency where depth always comes first, and then pass. Your object will be told to draw twice and the pass number will be one of those parameters, so it can know what buffers/textures/shaders it needs to activate.
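The two sort orders described here could be expressed as a pair of comparison functions along these lines. This is only a sketch with hypothetical names and a single texture ID per item, not the engine's actual code.

```cpp
#include <cstdint>
#include <tuple>

struct QueueItem {
    std::uint32_t pass;
    std::uint32_t shaderId;
    std::uint32_t textureId;
    float         depth;  // Larger means farther from the camera.
};

// Opaque items: pass first, then shader, then texture, then near-to-far.
inline bool OpaqueBefore(const QueueItem& l, const QueueItem& r) {
    return std::tie(l.pass, l.shaderId, l.textureId, l.depth) <
           std::tie(r.pass, r.shaderId, r.textureId, r.depth);
}

// Translucent items: far-to-near always wins; pass only breaks ties.
inline bool TranslucentBefore(const QueueItem& l, const QueueItem& r) {
    if (l.depth != r.depth) return l.depth > r.depth;
    return l.pass < r.pass;
}
```

Either function can be used as the comparison when sorting the index list, with the object reading the pass number back when it is told to draw.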

L. Spiro