Overview

There are many topics covering the concept behind fixing your time step. Last time I read them was many years ago and having just re-read them I just realized they already cover about 50% of what I planned to cover, but while they do give implementations and explain certain concepts fairly well, people still make faulty implementations or miss certain nuances that make a big difference between success and failure.

For thorough reading, check here:

http://gafferongames.com/game-physics/fix-your-timestep/

http://www.koonsolo.com/news/dewitters-gameloop/

Since the concept is already well documented I am going to try to address issues I have personally seen with people who have improperly implemented it or simply misunderstood the concept behind it (which is key to understanding why it is the standard way to implement a game loop and to implementing it properly).

Brief Overview

I will provide a brief overview of the technique for completeness and because getting straight to the main point might make things clearer to those who don’t want to do a lot of heavy reading.

The basic idea is that you want to render as often as you possibly can but only update logic (handling input, running AI, performing physics, anything other than rendering) at a fixed interval. This is the only way to create a stable physics simulation and in general a reliable game-state simulation, which is necessary for not only a consistent gameplay experience but also networking.

If you have played games that sometimes encounter a little lag in the frames-per-second department, you might see the same image on your screen for, for example, 2 seconds. When you get out of it you see the new state of the game after 2 seconds have passed, and it looks the same as if you had 60 frames-per-second for 2 seconds. It is not what you do see that needs explanation, it is what you don’t see.

Let’s say the lag was caused by a vehicle exploding in front of you. A lot of smoke and fire and particles were thrown onto your screen. What you don’t see is all of those particles in one spot 2 seconds later. You see an evenly distributed flow of smoke starting from the vehicle and rising 2 seconds high into the air. This is because they were not all just added on one frame that lasted 2 seconds. A simulation was secretly running over and over for 2 seconds, allowing the smoke to be added and to rise the same way as it normally would, regardless of how many times it was drawn to the screen.

Another example would be a boulder rolling down a hill with the ability to crush players and bounce off walls. If the game were to lag for 2 seconds and then resume, you might see the boulder further down the hill and one player crushed. But in order to get to its new position it had to bounce off 3 walls and a player’s head. There is no way it could calculate all of that just by going from the last frame 2 seconds to the new frame. It had to simulate the game state in increments at a fixed interval, which happens whether the screen is being updated or not.

But Isn’t That a Waste?

So if you understand that the simulation is only updating, say, 30 times per second, and that update is what causes things to move, then you might be wondering why you would ever render faster than that, since you would be rendering the same image over and over until the next logical update and things moved. The articles to which I linked both touch on this in their “interpolation” sections, but it might not be so clear for some that that was meant to address this specific issue.

In L. Spiro Engine I keep 4 matrices. Local, world, last world, and render. Local is needed to determine the world matrix obviously, and we all know the purpose of a world matrix. So what is the “last world” and “render”? In order to keep things appearing to be in motion between the logical updates we need to use interpolation, and for that we need to keep track of the last world matrix we were using. Note that it is not necessary to remember the whole matrix; you could store the position, rotation, and scales independently and interpolate between those instead.

Either way, the world and last world are updated only during logical ticks, while render is updated every frame by interpolating between those. This allows things to appear to be moving smoothly at any frame-rate even though they are really only changing positions 30 times per second.

But How Can That Work? Don’t I Need to Know its Next Position, Not its Last Position?

You may not have been aware of it, but every major title you have played is lagging behind graphically by a certain small amount. For this article I am sticking to 30 updates per second, so that would be 33.3 milliseconds of graphical lag.

You can’t know the next position. You have to interpolate from things’ last positions to their current ones.

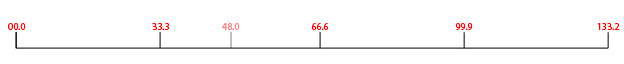

That means if my actual time stamp is 33.3 milliseconds into the game, I have just updated the player’s position and kept a copy of his or her last position, and am starting the interpolation between them. The amount of interpolation depends on how soon we know the next update will be, and in this case it will be another 33.3 milliseconds before that happens. So let’s use a few examples to demonstrate this idea.

Current time is 33.3. Next update will be at 66.6. In increments of 33.3 milliseconds, how close are we to 66.6? 0%.

Current time is 48.0. Next update will be at 66.6 (because updates happen every 33.3 milliseconds). In increments of 33.3 milliseconds, how close are we to 66.6? 44.1%. Why? Because the last logical update was at 33.3 and we are 14.7 millisecond past that.

So we have our current world matrix, from t = 33.3, and our last, which was at t = 0.0. We don’t have the matrix from t = 66.6 because we are not there yet. But we are 44.1% of the way there.

By interpolating between the last world and the world matrices by 44.1%, the result is equivalent to t = 14.7. What if we were at t = 110? Then we would be showing t = 76.7 to the player.

A very simple pattern has emerged. Whatever the fixed time step we are using is exactly the amount of visual delay there is. In our case, no matter the current time, we are showing the player how the game state appeared 33.3 milliseconds ago.

If this comes off as being unacceptable, you should be aware that every professional game you are playing is doing this. Yes even those fast-paced twitch shooters that rely heavily on reaction speed. You can’t detect it and no one playing your games will be able to detect it.

Implement with Care

Many people do realize that using a fixed time step is necessary but miss a few nuances. These were covered in the links above but I will reiterate for clarity since I have recently had personal experience with people who understood the idea of using a fixed time step but not the nuances.

Some people think a timer or sleep or even a while loop can be used to simply control the frame rate, and assuming that control is reliable they can simply pass a fixed update time each time the timer trigger, sleep ends, or while loop iterates. This will never get correct results. The thread scheduler is free to delay your game thread if another thread is busy. Your timer will never fire at exactly the time you give it; it will always be delayed by some nanoseconds. Sleep() has the same problem, and a while loop may be more reliable but still will always be off by some tiny amount.

There is no such thing as a truly perfect time-counter in the world of computers, since by the time you request the current time, some time has already passed (to mention nothing of the fact that computers don’t update time in real time, but only as often as their interrupts allow). But accumulating time in the above manners is simply compounding the amount of errors introduced by the world of computers.

By basing your logical updates on the actual amount of time that has passed as well as a computer can tell you, you can only be off by that amount of error no matter how much time has passed. It doesn’t accumulate, and that is important.

That is one key point to remember, but even this has a nuance. Request the current time once and only once per frame. Then store it and use that as a reference for everything else you want to do. This is not about performance, although that is one consideration (polling the current time is slow on some CPU’s). This is about consistency. Consider the extreme opposite in which you request the current time every time you need it. Suddenly one of your objects is falling faster than the rest simply because the time value it got was higher, so it considered that more time had passed since its last update, and thus it fell further. Note that this is to be followed strictly as far as “what happens inside the game simulation”, but don’t read my bolded text blindly; you are free to request the current time as often as necessary on other threads, such as the input thread or sound thread if you want to time-stamp certain events (and you should).

Another nuance is storage. Never store raw time values in floats or even doubles. Store them as 64-bit unsigned integers. Floats and doubles can be used for delta values—time since the last frame or update, because these values are small.

Updating your timers has its own nuances. Be careful of overflow. To avoid it, you should always start your own game’s time at 0 and just increment from there. Your game timer will never overflow even if you use microseconds (and you should), but the system timer will overflow fairly frequently. Use something like the following to avoid troubles.

/**

* Update the time.

*

* \param _bUpdateVirtuals If true, virtual values are updated as well.

*/

LSVOID LSE_CALL CTime::Update( LSBOOL _bUpdateVirtuals ) {

LSUINT64 ui64TimeNow = GetRealTime();

// Wrapping handled implicitly.

LSUINT64 ui64Dif = ui64TimeNow - m_ui64LastRealTime;

m_ui64LastRealTime = ui64TimeNow;

UpdateBy( ui64Dif, _bUpdateVirtuals );

}

Virtual time helps with pausing the game, and is a topic for another day.

Then there is the nuance associated with keeping track of when you need to perform another logical update. The articles above explain it correctly but I have still recently seen an implementation of it that was naive and the cause for a ton of slowdown. You might be tempted to do something like the following:

LSUINT64 ui64CurTime = tTimer.GetCurMicros();

if ( ui64CurTime - ui64LastTime > 33333ULL ) {

Update( 33333ULL );

ui64LastTime = ui64CurTime;

}

This will never produce the correct result. In my previous example I suggested that our current time was 48.0. If the last time was 0 we would indeed have done one update here, but the next update would come at t = 81.3, not t = 66.6. Furthermore, if the last time was 0 and the new time was 78, we would have incorrectly performed only 1 update when 2 were necessary.

The proper implementation is as follows:

LSUINT64 ui64CurTime = tTimer.GetCurMicros();

while ( ui64CurTime - ui64UpdatedTime > 33333ULL ) {

Update( 33333ULL );

ui64UpdatedTime += 33333ULL;

}

Now we will update as many times as is necessary to catch the game simulation up to the current time, and we will only update on regular intervals of 33.333 milliseconds.

Conclusion

There are a lot of little nuances of which to be aware when fixing your time step. Here I wanted to focus less on the concept itself, which is already very well documented, and more on the nuances and misunderstandings that commonly bite back at people trying to implement a stable game-state simulation. I hope this has made the inner workings a bit clearer and helped some people to be less hesitant in implementing it in their own products.

L. Spiro

Good article. Just one thing; the “handle wrapping gracefully” is unnecessary in unsigned integer arithmetics. A – B will return the correct difference regardless of if A is less than B or not.

You are right. I was so concerned with the concept that I didn’t think about the details of computer science. I would leave the code as it is, but your way is faster, so it is worth the change.

L. Spiro

Thanks for the article. You have a great wealth of good information on your website. I have a few questions regarding the fixed time step.

You mention that you should only query the time once and only once per frame. This makes sense for the most part, but wouldn’t it be appropriate to query the time again for each logical update?

Consider the following:

while ( tTimer.GetCurMicros() – ui64UpdatedTime > 33333ULL ) {

Update( 33333ULL );

ui64UpdatedTime += 33333ULL;

}

The reason is that ‘Update()’ takes a certain amount of time to execute and that elapsed time should be taken into consideration when trying to catch up to the current time.

If that isn’t appropriate, what about when performing updates of entities at different rates.

LSUINT64 ui64CurTime;

//update objects at 30hz

ui64CurTime = tTimer.GetCurMicros();

while ( ui64CurTime – ui64UpdatedTime30z > 33333ULL ) {

//update any objects that require 30hz

Update( 33333ULL );

ui64UpdatedTime30hz += 33333ULL;

}

//update some other objects at 2hz

//query the time again because x microseconds

//have passed since the previous request

ui64CurTime = tTimer.GetCurMicros();

while ( ui64CurTime – ui64UpdatedTime2hz > 500000ULL ) {

//update any objects that require 2hz

Update( 500000ULL );

ui64UpdatedTime2z += 500000ULL;

}

I guess the thing I want to avoid is ignoring the execution time of updates that happen prior (or during) my logical update loops.

What are your thoughts? Thanks.

In both cases the basic answer is the same: Objects that have “fallen behind” will “catch up” on the next tick.

Yes logical updates take a certain amount of time but querying the time again would actually cause that time to be lost, not gained. Querying the timer once per frame is vital to ensure no time is lost; you need to know exactly how much time has passed since the last frame in order to properly “catch up” objects as needed. This itself is how to account for “time spent performing logical updates”.

For the second case, it is true that certain objects will want to be updated less often. But when they do, they should basically handle it the same way. They are absolutely forbidden to query time directly but they can accumulate time each time they are told to tick, and using the same type of while loop (while ( ui64CurTime – ui64UpdatedTime2hz > 500000ULL ) …) they can perform the rest of their updating once per 0.5 seconds.

In my example the objects perform updating every logical tick only accumulating time, then performing a full update when enough time has accumulated. As an optimization it is possible to keep those objects in a separate list which is then only updated by the scene manager once every X seconds.

L. Spiro

After thinking about it some more I think I understand better how requesting the time more than once can cause problems.

It seems that if you request the time more than once, objects can easily become out of sync. I’ve re-read the section where you explain how one object could be falling faster than another because it thinks more time has passed, and it makes much more sense now.

If I keep my entire game simulation on a single thread, I will query the timer once and only once per frame.

What about a multi-threaded approach? (on PC, will my threads automatically be distributed amongst different cores by the Windows scheduler?) Should each thread query the time before doing their fixed-time updates? I’m assuming the answer is yes.

Thanks

Basically, each object should be updated by 1 and only 1 timer, and that timer should only be updated 1 time per logical cycle. A logical cycle is of course whatever constitutes a cycle of work for whatever system is running. That could be a single game loop iteration or an update of the sound thread, etc.

You can distribute your timers as you please as long as you keep to this idea. Usually you will have 1 timer per thread when it comes to isolated systems such as sound. The entire system runs on its own thread, and should have its own timer.

Distributed systems on the other hand are just a way of helping another system by sending work to other cores/threads. Like the game loop sending some physics to another thread. The work is still part of the main game system, and there is no need for more timers there.

L. Spiro

Nice article, thanks. Keep up the good work

I was wondering if there is any problem to do something like that?

ui64CurTime = tTimer.GetCurMicros();

ui64ElapsTime = (ui64CurTime % 33333ULL) * 33333ULL;

Update( ui64ElapsTime );

ui64UpdatedTime30hz += ui64ElapsTime;

I also read this.

http://stackoverflow.com/questions/48053/is-there-an-alternative-to-using-modulus-in-c-c

Hello Mr. Evil Drouet and thank you.

I believe you want to do:

ui64ElapsTime = (ui64CurTime / 33333ULL) * 33333ULL;

The problem with this is that it only updates one time when multiple updates would have been necessary. Instead of 2 updates at 33333ULL each, you make 1 update at 66666ULL. The amount of time you accumulate remains correct, but you no longer have a fixed time step. The articles in my link explain in more detail why a fixed time step is critical, but the main point is in simulation stability. Physics engines simply can’t run properly with variable time steps. A ball rests on a surface. Apply gravity for 33333ULL microseconds, the ball sinks a bit into the table, but is gently pushed back up, literally via a small bounce that you can’t see.

Same situation, but gravity is applied for 66666ULL microseconds. The ball “hits” the table (we consider it was already at rest, but all that means to a physics simulation is a small “hit” every frame) a lot harder, and in resolving the collision the ball ends up bouncing into the air. If your simulation takes too long, and a stack of balls is updating by, say, 1 update of 1.5 whole seconds, the simulation basically explodes, and you will see all the balls jump into the air for no apparent reason. This is why updating 2 times at 33333ULL is not the same as updating 1 time at 66666ULL.

L. Spiro

I see, thank you for the explanation

Great article! With 2 world matrices per object, how do you solve the problem of them changing during a render draw i.e. after it’s calculated the amount to interpolate by, but before the render thread has drawn them? Do you double buffer them?

I’m getting quite a bit of jerky movement when my tick and draw threads run in such a way that the amount to interpolate is around 0.9-1.0, and an object changes it’s data before the renderer draws it. I’m wondering if I’m missing something and this can be solved without storing 2 additional matrices.

With standard multi-threaded rendering there already has to be some kind of synchronization point in place where the data to be drawn gets copied into a command buffer that will later be processed by the render thread. You should be passing off a copy of the world matrix to that already. Adding fixed time-steps and interpolating between 2 matrices shouldn’t impact that system at all—it only changes which matrix you pass off to the command buffer. All matrices involved should be handled on the same (main) thread.

So it should be looking like this:

Main thread updates logic on all objects. Matrices are updated.

Main thread draws all objects. Matrices are interpolated here and all objects send render commands to a custom render command buffer.

Main thread draws all objects. Matrices are interpolated here and all objects send render commands to a custom render command buffer.

Main thread draws all objects. Matrices are interpolated here and all objects send render commands to a custom render command buffer.

Main thread updates logic on all objects. Matrices are updated.

Meanwhile:

Render thread eats commands from the custom render command buffer in order and sends them to Direct3D/OpenGL.

Render thread eats commands from the custom render command buffer in order and sends them to Direct3D/OpenGL.

Render thread eats commands from the custom render command buffer in order and sends them to Direct3D/OpenGL.

So if you have the kind of problem you are describing it is a sign that your work isn’t being distributed between the 2 threads correctly, at least not “correct” by normal multi-threaded rendering systems. Some systems, such as the one at our office, actually have yet another thread to handle pre-rendering things (which is where interpolating between 2 matrices would go if we did that at work), but we are targeting very specific hardware and a very specific class of games.

If you deviated from the standard multi-threaded setup, make sure your reasoning is sound, because the normal setup is the normal setup because it handles most cases well and avoids a lot of unhappy issues.

If you deviated intentionally and with a good reason, I would need to know more about your setup to give better advice, but double-buffering would likely be the correct answer.

If you deviated accidentally, this would be a good chance to look over your implementation and see if you’ve made a mistake somewhere etc.

L. Spiro

I definitely deviated accidentally as I didn’t realise that is the standard way of doing things with a multithreaded engine, I thought the render thread would directly access the draw method of the game, access the objects and interpolate their properties upon drawing. Conceptually this makes more sense to me that the threads would be as separate as possible and take ownership of the job they’ve been given, so that no spikes in any processing affect the other thread. I will probably change it to the way you’ve mentioned if that’s the normal way of doing things but before I do I have a couple of concerns:

Say my logic thread is running at 30hz and each tick takes most of that time to do it’s processing, let’s say 30ms out of that 33.3ms. Then for those 30ms there would be no commands being sent to the renderer – wouldn’t this lead to frame-rate loss and also inconsistent timing between render frames? This would lead to the render rate being somehow tied to the the tick rate no?

Decoupling rendering from logic (which is indirectly the subject of this article) and multi-threaded rendering are often confused with each other and when combining them it is sometimes hard to see exactly where each part of each system belongs.

Basically multi-threaded rendering occurs at a very low level such that it transparent to everything discussed in this article on fixing your time-step. That is, everything discussed here is completely the same whether using single-threaded or multi-threaded rendering; this level of the system has no idea which rendering type of being used, just as it doesn’t know if it is rendering with OpenGL or Direct3D. Multi-threaded rendering takes place at the lowest level, meaning the actual issues to draw calls.

That means you can still think about logic and rendering being decoupled as explained in this article, which means Tick() is called every 30 milliseconds while Draw() is called every frame (read http://lspiroengine.com/?p=351).

The call to Draw() issues a bunch of draw calls, but it does so through wrapper functions. This is not only necessary for cross-platform functionality but also for multi-threaded rendering.

Basically the only difference is that these wrappers call OpenGL/Direct3D functions directly if in single-threaded mode, or they put commands into a custom command buffer in multi-threaded mode.

So you won’t have any problems related to your concerns. You will still fill a command buffer to be rendered by the render thread every frame, not only every 30 milliseconds.

L. Spiro

I’m still having a bit of trouble understanding, sorry! Ok so the main thread calls tick(), then calls draw() as many times as it can before the next tick(). draw() creates interpolated matrices for objects, creating and pushing render commands and attaching the created matrix with each command. The render commands themselves will be processed and sent to OpenGL/DX on a separate render thread as quick as possible, but they will still only be created and sent to the render command buffer in between ticks. Assuming that in a heavy simulation the tick takes 30ms to complete, and for the sake of argument that draw() takes 1ms and the render thread takes 1ms to process commands, would the following not happen:

Time Main thread Render thread

0 tick 1 begins nothing to draw

. . nothing to draw

. . nothing to draw

30 tick 1 ends nothing to draw

31 draw 1 nothing to draw

32 draw 2 processes frame 1

33 tick 2 begins processes frame 2

. . nothing to draw

. . nothing to draw

63 tick 2 ends nothing to draw

64 draw 3 nothing to draw

65 draw 4 processes frame 3

66 tick 3 begins processes frame 4

creating uneven frame times and not drawing when it could? Whereas if we call draw() on the render thread and process the commands as we go, there will probably be some locking between threads whilst trying to get the latest matrices but in general it would look like this wouldn’t it:

Time Main thread Render thread

0 tick 1 begins draw/process 1

. . draw/process 1

. . draw/process 2

30 tick 1 ends draw/process 2

31 draw 1 draw/process 3

32 draw 2 draw/process 3

33 tick 2 begins draw/process 4

. . draw/process 4

. . draw/process 5

63 tick 2 ends draw/process 5

64 draw 3 draw/process 6

65 draw 4 draw/process 6

66 tick 3 begins draw/process 7

Your proposed method of multi-threaded rendering is not solving any problems. If your Tick() takes too long, slower frame-rates are the correct response. In other words, you have something to fix somewhere else. Keeping render speeds up by doing rendering at such a high level has no meaning (the renders are fake and inconsistent) and involves a huge cost in lock/unlock overhead. It is in fact much slower than single-threaded rendering, probably, and definitely slower than standard multi-thread rendering. In fact if you did get such a slow Tick(), one reason would be because of all the synchronization between the render and logic threads. Your render thread would literally be slowing down your logic thread, and this is an error.

Also, you can’t be half-done with your logical update and try to draw anyway. It’s not consistent. During that time, perhaps the physics engine has temporarily put the character a centimeter into the ground while working on intermediate calculations. The player doesn’t need to nor want to see that. Of course I am sure you have set up a system of locks and temporary render copies so that that would not happen on the renderer side, but you will still get some form of inconsistent results no matter what, and the locks and copies are slowing down your logical update more than the multi-threaded layout is helping, resulting in overall poorer performance.

L. Spiro

Hmm I see, I haven’t done any tests or anything to see how performance is affected by doing it that way but you’re right, it would involve a lot of locking and unlocking and end up slowing things down rather than speeding things up. I’m going to take your advice and go with the standard multithreaded route, this is probably one of those times to listen to the person who has years of industry experience I may experiment with the other way just for my own learning, but I’ll be going with your advice now. Thanks for the help!

I may experiment with the other way just for my own learning, but I’ll be going with your advice now. Thanks for the help!

LARGE_INTEGER frequency;

QueryPerformanceFrequency( &frequency );

LARGE_INTEGER updateTime;

QueryPerformanceCounter( &updateTime );

//puts updateTime in ms

updateTime.QuadPart /= frequency.QuadPart * 1000;

LARGE_INTEGER currentTime;

while ( running )

{

QueryPerformanceCounter( ¤tTime );

//puts currentTime in ms

currentTime.QuadPart /= frequency.QuadPart * 1000;

while ( currentTime.QuadPart – updateTime.QuadPart > 33 )

{

Update( 33.0f / 1000.0f );

updateTime += 33;

}

interpolation = …;

Draw( interpolation );

}

Is this a good loop?

You have 2 main problems:

#1: Milliseconds are too slow for game timing. Always use microseconds.

#2: You are passing a floating-point value to Update() which makes it impossible for objects in your scene to accumulate time accurately.

The solution is to make a CTime class that handles all of the calls to QueryPerformanceCounter() and friend and updates its time once per frame, stores the current microseconds, microseconds since last update, and the floating-point time since last update, and gets passed to Update(). Then objects are free to access the time values they need and your code for handling QueryPerformanceCounter() ect. can be moved away from your main loop, making it cleaner.

The only caveat is that the CTime class needs 2 forms of updating and you need 2 instances of it:

The #1 CTime instance A calls CTime::Update() at the start of each frame to determine how much time has passed since the last frame. This is exactly the same as you have now. This is used to calculate how many times you call your own Update() function.

The #2 CTime instance B gets its UpdateBy() method called each time the logical update loop is run. It is updated by 33,333 microseconds each time. And this is the timer you pass to Update().

L. Spiro

does this look any better?

http://pastebin.com/HvGRwVpg

class Timer//works in microseconds

{

public:

Timer()

{

QueryPerformanceFrequency( &frequency );

}

void SetUpdateRate( unsigned int updatesPerSecond )

{

timePerUpdate = 1000000 / updatesPerSecond;

}

void OnStateChange()

{

QueryPerformanceCounter( &updateTime );

updateTime.QuadPart /= frequency.QuadPart / 1000000;

}

void OnFrameStart()

{

QueryPerformanceCounter( ¤tTime );

currentTime.QuadPart /= frequency.QuadPart / 1000000;

}

bool Update()

{

if ( currentTime.QuadPart – updateTime.QuadPart > timePerUpdate )

{

updateTime.QuadPart += timePerUpdate;

return true;

}

return false;

}

LONGLONG GetTimeStep()

{

return timePerUpdate;

}

float GetInterpolationFactor()

{

return ( float )( currentTime.QuadPart – updateTime.QuadPart ) / timePerUpdate;

}

private:

LARGE_INTEGER frequency;

LONGLONG timePerUpdate;

LARGE_INTEGER updateTime, currentTime;

};

better?

There is room for improvement, but frankly you can’t expect to write a perfect timer on your first, second, or even third try. Knowing what it needs to do and how to make it do that just comes with experience.

I haven’t tested the validity of the code but I leave that up to you.

One thing you should add is the ability to get the amount of time that has passed since the last update in microseconds as a u64 type, not only as a floating-point type.

When objects need to accumulate time, they need the u64 value. Time should not be accumulated in floating-point formats.

L. Spiro

Hey Spiro,

How does the Windows message loop would integrates with the game loop? You just have it after while(peekmessage) ?

How does all that integrates with a game engine?(how all that is hide to the user of the engine). A post about that would be cool (engine + message loop + input + game loop), if theres enough to discuss(not even considering multi-platform)..and if you`re in the mood of course ;D.

This will be the subject of my next article, though I have some higher-priority items to knock off from other things first.

L. Spiro

hey could you just explain how i can reduce the cpu usage. Does the loop do it by itself because it only updates a fixed number of times and the draw calls block? or do i need to add sleeps or something to get the cpu usage down. or do i even need to worry about it. this is for an fullscreen game running on a desktop. i’d think the cpu being at 100% wouldn’t matter but maybe it does if it damages the hardware, overheats or something

Games typically do not want to reduce CPU usage. It is often the opposite—you want to maximize CPU usage. But that does not mean any threads should be using any CPU if they have not been assigned a task to perform. Most threads should be in a waiting state until given a task. On Windows this would be done with WaitForSingleObject(). When something gives them a task it can signal them to come back to the real world with SetEvent(), which point they should execute that task and wait for the next signal.

Strategies on how to distribute tasks wisely across the available cores is a deeper discussion, but there are many lengthy articles on it.

http://software.intel.com/en-us/articles/designing-the-framework-of-a-parallel-game-engine is one.

Another simpler way that is fairly effective is to force v-sync, but users can override this in their control panels so it is not guaranteed to do anything.

L. Spiro

What GetRealTime() returns in your implementation?

/**(&ui64Ret) ) ) { return ui64Ret; } * Gets the real system time. Not to be used for any other purpose besides random-number

* seeding.

*

* \return Returns the real system time in system tick units.

*/

LSUINT64 LSE_CALL CTime::GetRealTime() const {

#if defined( LSE_WINDOWS )

if ( m_bHiRes ) {

LSUINT64 ui64Ret;

if ( ::QueryPerformanceCounter( reinterpret_cast

}

return ::clock();

#elif defined( LSSTD_TIME_MAC )

return ::mach_absolute_time();

#else

#error "No implementation for CTime on this platform."

#endif // #if defined( LSE_WINDOWS )

}

L. Spiro

Thanks. Right now i am trying to get this running in my project.

Speaking of Windows, which i work on, i see that you use reinterpret_cast but does not specify type to cast into ?

That syntax does not work for my compiler.

It works for any type that is defined (limitations of the cast itself ignored). If I used it without adding a type, please point out where I have made such a mistake and it will either be corrected or explained.

L. Spiro

In your previous post, looks like this site is eating brackets ( less-greater brackets).

I’ve seen a few posts that look that way during editing but not on the main site. You will have to provide a specific example.

L. Spiro

Look for your reply above with reinterpret_cast(&ui64Ret) line. I see no brackets where type should be.

I see. On replies I don’t seem to have editorial access.

The type part of “reinterpret_cast” is “LARGE_INTEGER *” in that case.

L. Spiro

Timer as you said above:

Time::Time()

: currTime(0ull), lastTime(0ull)

{

}

…

void Time::update()

{

auto timeNow = getRealTime();

auto diff = timeNow – lastTime;

lastTime = timeNow;

updateBy(diff);

}

void Time::updateBy(uint64 t)

{

currTime += t;

}

Then game update:

static auto updatedTime = globalTime.get(); // return currTime

auto curTime = globalTime.get();

while ( curTime – updatedTime > 33333ULL )

{

pSceneManager->update(33333ULL);

updatedTime += 33333ULL;

}

My sprite update (in scene manager update):

void Sprite::update(uint64 dt)

{

float t = ??? HERE WHAT????

D3DXVECTOR2 lPos;

D3DXVec2Lerp(&lPos, &pos, &lastPos, t);

pos = lPos;

…

}

float t = dt * (1.0f / 1000000.0f);

L. Spiro

Thank you very much.

I see now that ‘t’ is always constant?

Can i just calculate it once and keep it timer class and pass that to scene manager update?

Not if you ever want to change that value at run-time, which Perfect Dark does. Think about all possible use-cases before deciding on an action.

L. Spiro

I can’t get this right, if i enable vsync (while without fps is 3000+) movement is slower and jerky, it should be at the same speed.

I update input only once to move my sprite:

if(input->keyPressd( someKey ) {

vec2 oldPos = sprite->getPos(); // return pos

vec2 newPos = oldPos + dir * moveSpeed;

sprite->setPos(newPos);

}

and then i call update within above loop:

void Sprite::update(uint64 dt)

{

float t = dt * (1.0f / 1000000.0f);

D3DXVECTOR2 lPos;

D3DXVec2Lerp(&lPos, &pos, &lastPos, t);

pos = lPos;

…

}

then after that loop i update vertices and set lastPos:

void Sprite::commitVertices()

{

lastPos = pos;

vertex[0].pos = pos;

vertex[1].pos = pos + D3DXVECTOR2(dim.x, 0.0f);

…//and so forth

}

and after that draw calls are made.

Thank you for your time.

I don’t see where the error is and it likely requires a debugger to find it.

Additionally, why are you updating vertices manually? A vertex buffer almost never needs to be updated on the CPU.

L. Spiro

Is frequency needed to be calculated every frame?

My current class is the following:

class Time

{

public:

Time();

double GetDeltaTime();

private:

LARGE_INTEGER frequency;

LARGE_INTEGER t0;

};

It should only be calculated once and it can be a static value, but implementations are simpler if they are just regular members.

L. Spiro

Do I really need convert to float every time on my Actor->Update(UINT64 dt) function?

That is a bit expensive. This implementation is meant to be comprehensive. What you should do is either pass the actual CTime object I showed here, which should determine the float value once on each update, or make a simple MICROSECOND structure which stores the float value once each time its microsecond value is updated. It could provide either a method to access it (.AsFloat()), a cast operator, or both.

L. Spiro

Do you mean a pointer to the Actor class?!

#include “Time.h”

#include

Time::Time() : frequency(0ULL), currTime(0ULL), deltaTime(0.0f)

{

if ( QueryPerformanceFrequency( reinterpret_cast(&frequency) ) == -1 )

{

throw TimeException();

}

}

void Time::Update()

{

QueryPerformanceCounter( reinterpret_cast(&currTime) );

}

Actor::Actor() : pTime(0)

{

}

void Actor::Update()

{

//do something with pTimer->GetCurrMicroSec()

}

I am talking about here: http://lspiroengine.com/?p=351

CState has a pointer to CGame. CGame has the main CTime object and CState has a (or as many as it wants) CSceneManager instance.

CSeneManager::Tick( const CTime * )The scene manager is responsible for updating all actors, so it passes the time to all of them.

CActor::Tick( const CTime * )CPhysics::Tick( const CTime * )Etc.

L. Spiro

You are saying that CState has a Scene instance. Do you really mean this? CGame has a Scene* pScene or a CState can have objects? Isn’t the responsabiblity of a CState just control the flow of a CGame ?!

And for instance, if my CState is a CSceneTransition, it’ll have a CTime instance or variables to accumulate time and trigger some condition?

I saw in your post that a CState class has a CTime varible but, CGame has a Main Time variable.

Doesn’t make sense call:

CGameState::Update(Game* _pGame)

{

this->time.Update();

}

for me makes more sense to cal:

CGameState::Update(Game* _pGame)

{

CTime* pTime = _pGame->GetTime();

this->t += _pGame->GetFixedMicroSec();

if ( t > 1000000ULL )

{

t = 0ULL;

StateTransition* pStateTransition…

_pGame->Queue( pStateTransition );

}

}

Yes, the state has the scene manager.

A scene manager is just an instance of a game world. A state can create as many as it needs. That means the main menu has no scene manager.

This is exactly why you don’t put scene managers on the game class—by doing so you have 1 and exactly 1 scene. If you need 0 scenes then you are wasting memory. If you need more than 1 scene you are out of luck.

CGame runs the entire game. It is thus not logical to put a scene manager there, as most games are made of many states but only 1 or 2 that actually have scenes.

Logic for gameplay is handled by the state class, not the game class. Hence it only makes sense that the scene managers are created by the states, not the game.

L. Spiro

Another thing… if I’m not using the CPU Frequency (QueryPerformanceFrequency), why I’m getting it on the Time’s constructor?!

There is no other way to know how to convert from ticks to seconds. You are required to use it.

L. Spiro

class Gameplay : public GameState

{

public:

virtual void Init(Game* _pGame);

virtual void Destroy(Game* _pGame);

virtual void Update(Game* _pGame);

virtual void Draw(Game* _pGame);

private:

std::vector scenes; //Or pointers

Character character;

};

Another thing… I’ve changed the Actor’s Update function and I’ve lost some frames .

.

I think is that I’m passing the Time around the high level classes that make the Game or I just forgot to pass a reference at some point.

I’m not returning the UINT64 value as a reference because it takes more time to de-reference a primitive instead of creating a new instance. Do you think that this is the main cause of my problem?

e.g.

UINT64 GetTime() const { return curTime; } // I’m using

const UINT64& GetTime() const { return curTime; } // To be used

UINT64 is a large data to be passed as a copy?

It should not make such a difference. You can try using a reference but the problem is likely elsewhere.

L. Spiro

You forgot to say that QueryPerformanceFrequency is optional.

You only use it convert the value into seconds.

In the way you’re doing (accumulating time in microseconds), you’ll probably don’t need it.

QueryPerformanceFrequency() is not optional. I do not accumulate time in microseconds, I accumulate time in ticks. Anything else causes drift. QueryPerformanceFrequency() is necessary for the conversion from ticks to microseconds, milliseconds, etc.

L. Spiro

Do you mean…

float Time::GetCurSec() const

{

return curTime / frequency; // Microseconds to seconds.

}

Actor::Update(const Time& _time)

{

curTicks++;

fSec = _time.GetCurSec();

}

My current update function…

Actor::Update(const Time& _time)

{

curTime += _time.GetFixedMicSec(); // Returns 33333ULL.

}

And if you switch from accumulating fixed time to fixed ticks (in the updated by) and doing the conversion when necessary?

I mean, the system time (one instance of Time) accumulates variable ticks and the simulation time (another instance of Time) accumulates fixed ticks instead of microseconds.

Is that makes sense? I just brainstormed you.

Hello. And Bye.

Hey i implemented this in my vulkan engine but i have one question.

1.) What if i want to teleport objects straight from A to B? With this approach the positions gets interpolated and the objects moves pretty fast from A to B. How do i solve this in an easy manner?

Greetings

SH

This is a common problem but is easy to fix.

Just add a function that sets both the current and last position to the new location.

L. Spiro

Thanks for the fast response this was exactly what i assumed.

this was exactly what i assumed.

Another question, which i encountered today:

Before this system i rendered my shadowmaps in the interval where i updated my objects (e.g. 60x per second). With the interpolation system this gives me ugly artifacts, so changed it to generate them every frame (rendering the shadow-map with the interpolated values aswell). Whats bothering me is that this has a huge performance impact (i lost like 3ms). Is there another solution for this?

Greeting

SH

Shadow maps must be updated once per frame.

To make them faster, employ other techniques specifically for shadow maps, such as caching all static objects and only rendering into them dynamic objects.

L. Spiro

Welp the performance problem comes from the two-pass gaussian blur i have implemented to get soft-shadows. (I am using Variance-Shadow-Mappping). Without that rendering the shadow-maps every frame is actually acceptable. Beside that i am curious how do you do your rotation interpolations. I’m using quaternions and use LERP, but that gives me a problem at 120° where the signs from the components of the quaternion changes.